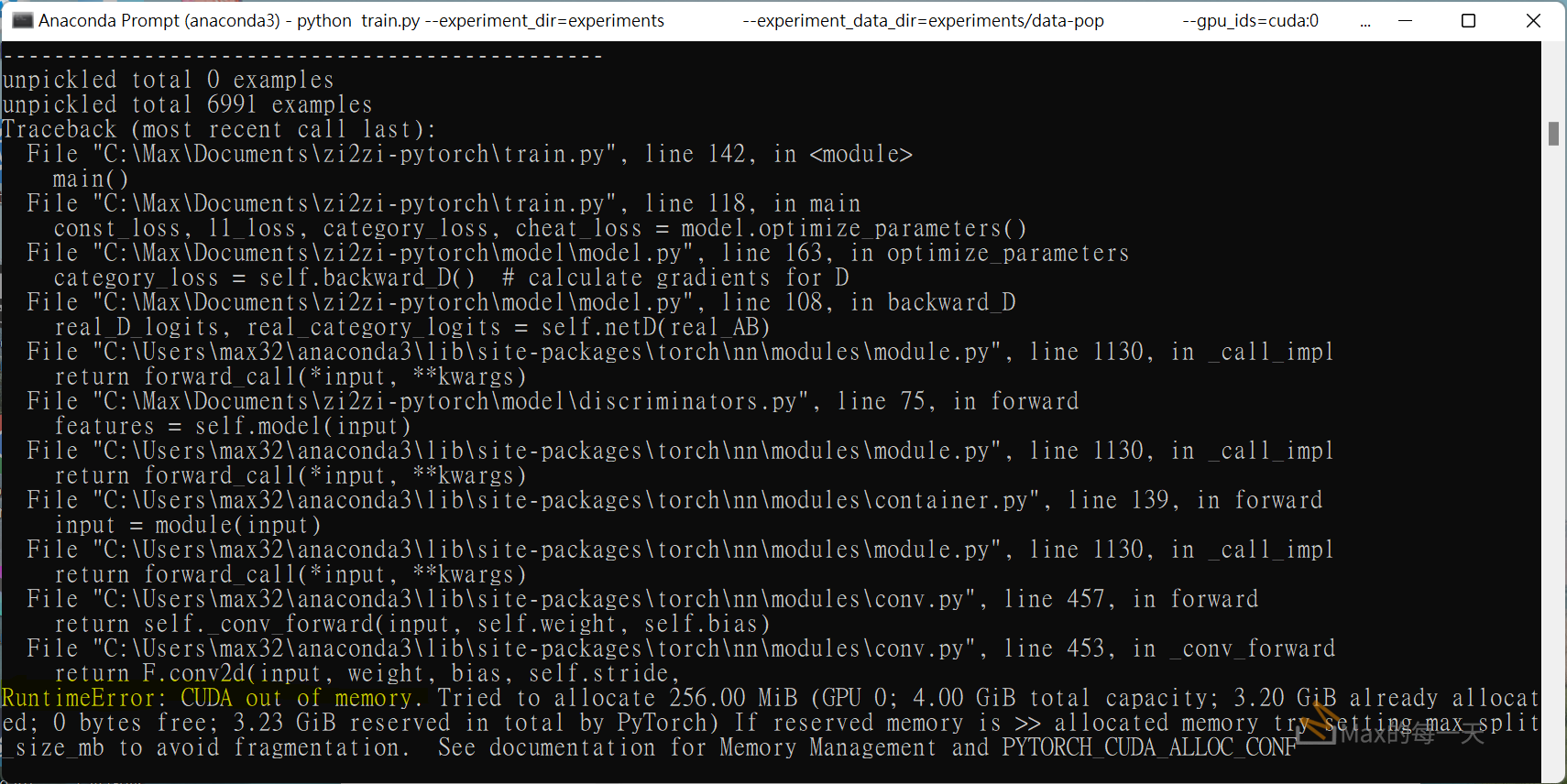

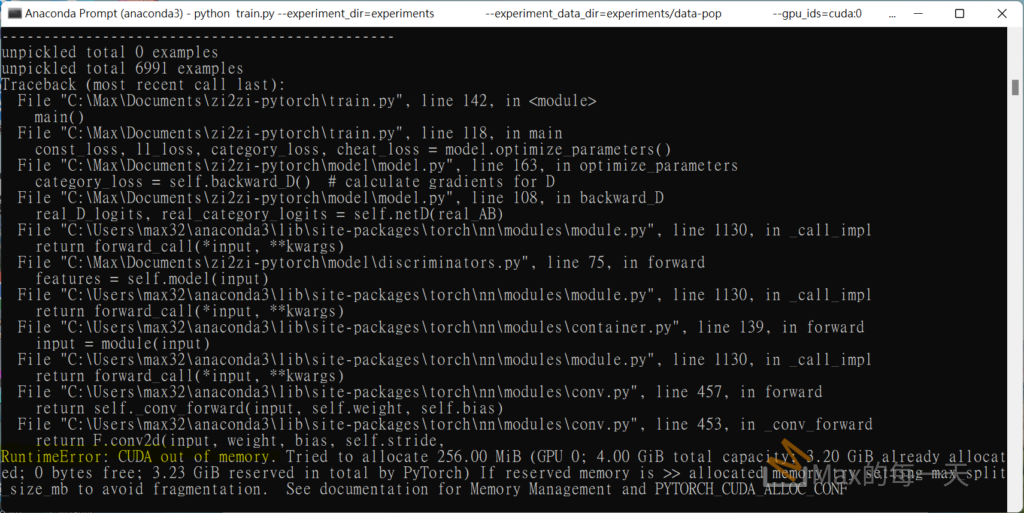

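Code that runs fine on Colab can fail with an out-of-memory error when it is moved to a laptop with a modest GPU. Almost everyone runs into this. Here is the official PyTorch discussion thread: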

https://github.com/pytorch/pytorch/issues/16417

Stack Overflow discussion thread:

How to solve ‘ CUDA out of memory. Tried to allocate xxx MiB’ in pytorch?

https://stackoverflow.com/questions/61234957/how-to-solve-cuda-out-of-memory-tried-to-allocate-xxx-mib-in-pytorch

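The quickest fix is to lower `batch_size`, for example to 32. A smaller batch means fewer samples (and their intermediate activations) have to sit in GPU memory during each training step.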

> Try reducing your batch_size (ex. 32). This can happen because your GPU memory can’t hold all your images for a single epoch.
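If you don't know in advance which batch size your GPU can handle, one option is to try a large value and halve it each time you hit an out-of-memory error. The following is a minimal sketch under a few assumptions. `run_with_batch_fallback` and `fake_train_step` are hypothetical names, not PyTorch APIs. The sketch also assumes your training step can be wrapped in a callable `step(batch_size)`, for example one that rebuilds its `DataLoader(dataset, batch_size=batch_size)` on each call. It relies on PyTorch reporting CUDA OOM as a `RuntimeError` whose message contains "out of memory" (newer versions raise `torch.cuda.OutOfMemoryError`, which subclasses `RuntimeError`). To keep the sketch runnable without a GPU, the demo simulates the error.

```python
def run_with_batch_fallback(step, batch_size, min_batch_size=1):
    """Call step(batch_size); on an out-of-memory RuntimeError, halve the
    batch size and retry. Returns (result, batch_size_that_worked)."""
    while batch_size >= min_batch_size:
        try:
            return step(batch_size), batch_size
        except RuntimeError as err:
            # CUDA OOM surfaces as a RuntimeError mentioning "out of memory";
            # any other RuntimeError is a real bug, so re-raise it.
            if "out of memory" not in str(err):
                raise
            # In real PyTorch code you might also call torch.cuda.empty_cache() here.
            batch_size //= 2
    raise RuntimeError(f"no batch_size >= {min_batch_size} fit in GPU memory")


def fake_train_step(batch_size):
    # Hypothetical stand-in for one training step: pretend the GPU
    # can only fit batches of up to 40 samples.
    if batch_size > 40:
        raise RuntimeError("CUDA out of memory. Tried to allocate 512.00 MiB")
    return f"trained with batch_size={batch_size}"


result, used = run_with_batch_fallback(fake_train_step, 128)
print(result)  # trained with batch_size=32
```

Starting at 128, the simulated step fails at 128 and 64, then succeeds at 32. Halving keeps the number of retries small (logarithmic in the starting size). The trade-off is that it may settle below the true maximum, as it does here, where 40 would also have fit.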